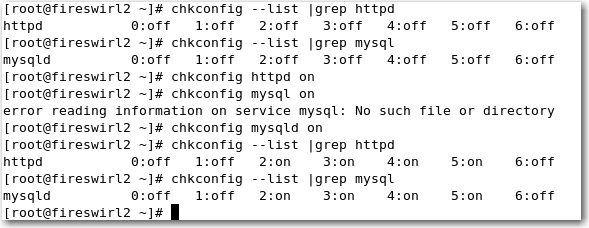

Start Linux Service Automatically During Reboot

I thought this might be useful to some, as I ran into this problem last night.

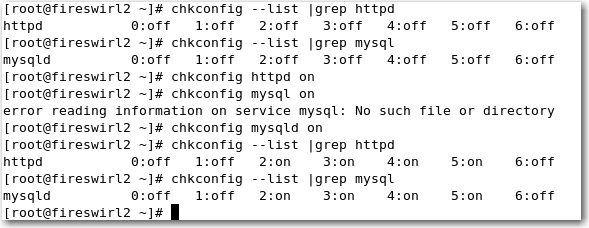

I googled around and couldn’t find anything matching the error message.

I checked the vCenter server’s physical DVD-ROM (drive Z:): the tray is closed but there is no CD/DVD in it, and none of the VMs has anything attached to this physical DVD-ROM.

I am pretty sure this error message is related to the vCenter server starting up rather than to any individual VM; somehow VC is trying to communicate with the physical DVD-ROM.

Anyone?

I ran into a small problem when installing the Chinese Language Pack (Simplified Chinese) on Windows Server 2008 R2 SP1. The downloaded exe wouldn’t install; later I found out that you need the exact language pack for your exact version of W2K8 (SP1, SP2, R2, R2 SP2 and so on). For me that’s R2 SP1, so all you need to do is download the correct version and install it.

The cool thing is that you can now change the Windows UI to Chinese completely. No wonder there are users in Mainland China running W2K8/W2K12 as their desktop OS (i.e., an SLP thing).

Update Feb-20

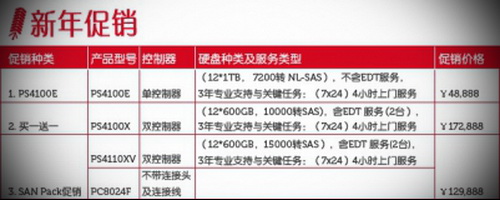

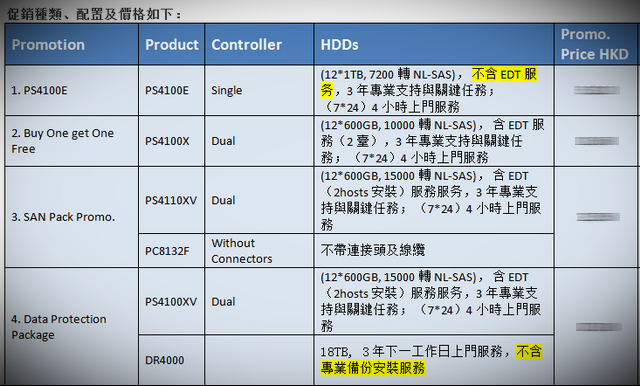

Just received the latest promotion for Hong Kong customers. It’s not exactly attractive: I don’t want any Series E, and options 3 and 4 require buying something I don’t need.

This leaves only option 2, but it’s Near-Line SAS, which is different from SAS (NL-SAS is basically a SATA disk with a SAS connector). So no, I don’t want any SATA, for the same reason I don’t want Series E.

Hey, somehow I think Option 2 is a cheat!

How SAS, Near Line (NL) SAS, and SATA disks compare

By Scott Lowe

February 7, 2012, 10:20 AM PST

Takeaway: Scott Lowe breaks down the differences in reliability and performance between SAS, Near-Line SAS, and SATA drives.

When you buy a server or storage array these days, you often have the choice between three different kinds of hard drives: Serial Attached SCSI (SAS), Near Line SAS (NL-SAS) and Serial ATA (SATA). Yes, there are other kinds of drives, such as Fibre Channel, but I’m focusing this article on the SAS/SATA question. Further, even though solid-state disks (SSD) can have a SAS or SATA interface, I’m not focused here on SSDs. I’m focusing solely on the devices that spin really, really fast and on which most of the world’s data resides.

So, what is the real difference between SAS, NL-SAS and SATA disks? Well, to be cryptic, there are a lot of differences, but I think you’ll find some surprising similarities, too. With that, let’s dig in!

SAS

SAS disks have replaced older SCSI disks to become the standard in enterprise-grade storage. Of the three kinds of disks, they are the most reliable, maintain their performance under more difficult conditions, and perform much better than either NL-SAS or SATA disks.

In reliability, SAS disks are an order of magnitude safer than either NL-SAS or SATA disks. This metric is measured in bit error rate (BER), or how often bit errors may occur on the media. With SAS disks, the BER is generally 1 in 10^16 bits. Read differently, that means you may see one bit error out of every 10,000,000,000,000,000 (10 quadrillion) bits. By comparison, SATA disks have a BER of 1 in 10^15 (1,000,000,000,000,000 or 1 quadrillion). Although this does make it seem that SATA disks are pretty reliable, when it comes to absolute data protection, that factor of 10 can be a big deal.
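To put that factor of 10 into numbers, here is a minimal sketch of the arithmetic. The BER figures are the ones quoted above; the 10 TB read workload is a hypothetical figure picked purely for illustration, and the model simply assumes errors occur at the quoted average rate.

```python
# Expected number of bit errors when reading a given amount of data.
# BER figures (1 in 10^16 for SAS, 1 in 10^15 for SATA) are from the article;
# the 10 TB workload below is a hypothetical example value.

BITS_PER_TB = 8 * 10**12  # decimal terabyte, as drive vendors count it


def expected_bit_errors(terabytes_read: float, bits_per_error: float) -> float:
    """Average number of bit errors expected after reading `terabytes_read` TB."""
    return terabytes_read * BITS_PER_TB / bits_per_error


sas_errors = expected_bit_errors(10, 1e16)
sata_errors = expected_bit_errors(10, 1e15)

print(f"SAS : {sas_errors:.3f} expected bit errors per 10 TB read")
print(f"SATA: {sata_errors:.3f} expected bit errors per 10 TB read")
```

Whatever workload you plug in, the SATA figure stays ten times the SAS figure; the absolute numbers only become uncomfortable once the volume of data read gets large.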

SAS disks are also built to more exacting standards than other types of disks. SAS disks have a mean time between failure of 1.6 million hours compared to 1.2 million hours for SATA. Now, these are also big numbers – 1.2 million hours is about 136 years and 1.6 million hours is about 182 years. However, bear in mind that this is a mean. There will be outliers and that’s where SAS’s increased reliability makes it much more palatable.
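The MTBF figures are easier to reason about as a yearly failure probability. The sketch below converts them, assuming a constant failure rate (an exponential lifetime model) and a drive that runs 24x7; both are simplifying assumptions that are not stated in the article.

```python
import math

HOURS_PER_YEAR = 24 * 365  # 8,760 hours; leap years ignored


def mtbf_years(mtbf_hours: float) -> float:
    """Express an MTBF given in hours as years of continuous operation."""
    return mtbf_hours / HOURS_PER_YEAR


def annualized_failure_rate(mtbf_hours: float) -> float:
    """Chance that a drive fails within one year of 24x7 operation.

    Assumes a constant failure rate (exponential lifetimes).
    """
    return 1 - math.exp(-HOURS_PER_YEAR / mtbf_hours)


# MTBF values as quoted in the article.
for name, mtbf in (("SAS", 1.6e6), ("SATA", 1.2e6)):
    print(f"{name}: {mtbf_years(mtbf):.1f} years MTBF, "
          f"{annualized_failure_rate(mtbf):.2%} chance of failure per year")
```

Under these assumptions both drive types come out well under 1% per year per drive, which is why the difference only becomes visible across a large population of disks.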

SAS disks/controller pairs also have a multitude of additional commands that control the disks and that make SAS a more efficient choice than SATA. I’m not going to go into great detail about these commands, but will do so in a future article.

NL-SAS

NL-SAS is a relative newcomer to the storage game, but if you understand SATA and SAS, you already know everything you need to know about NL-SAS. You see, NL-SAS is basically a merging of a SATA disk with a SAS connector. From Wikipedia: “NL-SAS drives are enterprise SATA drives with a SAS interface, head, media, and rotational speed of traditional enterprise-class SATA drives with the fully capable SAS interface typical for classic SAS drives.”

There are two items of import in that sentence: “enterprise SATA drives” and “fully capable SAS interface”. In short, an NL-SAS disk is a bunch of spinning SATA platters with the native command set of SAS. While these disks will never perform as well as SAS thanks to their lower rotational rate, they do provide all of the enterprise features that come with SAS, including enterprise command queuing, concurrent data channels, and multiple host support.

Enterprise/tagged command queuing. Simultaneously coordinates multiple sets of storage instructions by reordering them at the storage controller level so that they’re delivered to the disk in an efficient way.

Concurrent data channels. SAS includes multiple full-duplex data channels, which provides for faster throughput of data.

Multiple host support. A single SAS disk can be controlled by multiple hosts without need of an expander.

However, on the reliability spectrum, don’t be fooled by the acronym “SAS” appearing in the product name. NL-SAS disks have the same reliability metrics as SATA disks – BER of 1 in 10^15 and MTBF of 1.2 million hours. So, if you’re thinking of buying NL-SAS disks because SAS disks have better reliability than SATA disks, rethink. If reliability is job #1, then NL-SAS is not your answer.

On the performance scale, NL-SAS won’t be much better than SATA, either. Given their SATA underpinning, NL-SAS disks rotate at speeds of 7200 RPM… the same as most SATA disks, although there are some SATA drives that operate at 10K RPM.

It seems like there’s not much benefit to the NL-SAS story. However, bear in mind that this is a SATA disk with a SAS interface and, with that interface comes a number of benefits, some of which I briefly mentioned earlier. These features allow manufacturers to significantly simplify their products.

SATA

Lowest on the spectrum is the SATA disk. Although it doesn’t perform as well as SAS and doesn’t have some of the enterprise benefits of NL-SAS, SATA disks remain a vital component in any organization’s storage system, particularly for common low-tier, mass storage needs.

When you’re buying SATA storage, your primary metric is more than likely to be cost per TB and that’s as it should be. SAS disks are designed for performance, which is why they’re available in 10K and 15K RPM speeds and provide significant IOPS per physical disk. With SAS, although space is important, the cost per IOPS is generally just as, if not more, important. This is why many organizations are willing to buy speedier SAS disks even though it means buying many more disks (than SATA or NL-SAS) to hit capacity needs.

Summary

At a high level, SAS and SATA are two sides of the storage coin and serve different needs — SAS for performance and SATA for capacity. Straddling the two is NL-SAS, which brings some SAS capability to SATA disks, but doesn’t bring the additional reliability found with SAS. NL-SAS helps manufacturers streamline production, and can help end users from a controller perspective, but they are not a replacement for SAS.

In an upcoming post, I’ll talk about SAS commands and why they help cement SAS’s enterprise credibility.

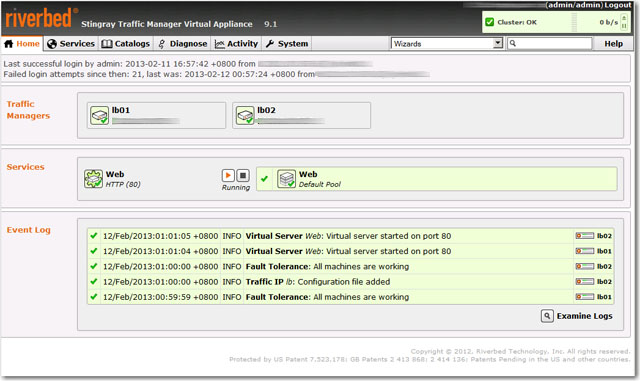

What can I say? Riverbed Stingray Traffic Manager (previously known as Zeus Traffic Manager) is probably one of the best load balancers on the market that I’ve tested, and it’s now also available in VMware OVF format.

Going from the initial OVF deployment to the first load-balanced web sites took less than 30 minutes. Simple concept, and solid performance during stress testing (over 10,000 concurrent users per second).

I particularly like the admin GUI: simple and intuitive, everything is self-explanatory and no-nonsense.

Best of all, it also comes with an integrated Application Firewall as well as a Traffic Manager cluster mode, in which you can add one or more Traffic Managers to the cluster to make it HA. Wow, this idea is brilliant!

I’ve even gone as far as adding a third TM (on a different subnet) to the cluster. It sort of worked, but failed when creating a Traffic IP, as the Traffic IP must be visible on all subnets. I think this can easily be solved with a router between the two subnets, though. Alternatively, GSLB is the next option on my list, but I don’t have time now and will try it later.

The most magical feature is Aptimizer, which transparently optimizes your web pages (i.e., compresses them, which reduces load time) without you having to rewrite any code.

Stingray Aptimizer is what was formerly known as Website Accelerator, or WAX. It was created by New Zealand software developer Aptimize to rejigger and accelerate web pages running on IIS or Apache web servers as well as pages stored on content delivery networks from Akamai Technologies.

Aptimizer analyzes how web pages load and reorganizes the content so a web browser doesn’t have to make so many roundtrips back to the web server to load a page. Because there are dozens of elements on a typical page, reconfiguring the web pages on the fly and storing the more efficient web page in cache on the web server can reduce page load times by a factor of four. The beauty is that this optimization does not change the web applications one bit, so you don’t have to modify your code.

The only complaint is probably the cost, which is prohibitive for the average SMB. But since Riverbed is really targeting the enterprise market, I guess they don’t really care about the little ones after all.

Oh… there is also a free Developer edition, fully functional but rate-limited (10 requests per second). Don’t forget to try it out.

This is the latest recommendation from Dell EqualLogic, found in the Firmware 6.0 Release Notes:

Beginning with this release, the Group Manager GUI no longer includes the option for configuring a group member to use RAID 5 for its RAID policy. RAID 5 carries higher risks of encountering an uncorrectable drive error during a rebuild, and therefore does not offer optimal data protection. Consequently, Dell recommends against using RAID 5 for any business-critical data.

RAID 5 may still be required for certain applications, depending on performance and data availability requirements. To allow for these scenarios, you may still use the CLI to configure a group member to use RAID 5.

For a complete discussion of RAID policies on PS Series systems, review the Dell Technical Report titled PS Series Storage Arrays: Choosing a Member RAID Policy, which can be downloaded from either of the following locations:

• www.equallogic.com/resourcecenter/documentcenter.aspx

• en.community.dell.com/techcenter/storage/w/wiki/equallogic-tech-reports.aspx

In addition, Dell recommends against using RAID configurations that do not use spare drives. You should convert all group members that are using a no-spares RAID policy to a policy that uses spare drives.
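The RAID 5 rebuild risk mentioned in the release note can be estimated with some simple arithmetic. The following is only a rough sketch, not Dell’s own method: it assumes bit errors are independent and occur at the BER figures quoted in the SAS/NL-SAS/SATA article earlier on this page, and the six-drive, 2 TB-per-drive RAID 5 set is a hypothetical layout chosen for illustration.

```python
import math

BITS_PER_TB = 8 * 10**12  # decimal terabytes


def rebuild_error_probability(drive_count: int, drive_tb: float,
                              bits_per_error: float) -> float:
    """Chance of at least one unrecoverable read error during a RAID 5 rebuild.

    A RAID 5 rebuild has to read every surviving drive in full, so the
    number of bits read is (drive_count - 1) * drive size.
    """
    bits_read = (drive_count - 1) * drive_tb * BITS_PER_TB
    # 1 - (1 - p)^n, computed via log1p/expm1 to stay accurate for tiny p.
    return -math.expm1(bits_read * math.log1p(-1.0 / bits_per_error))


# Hypothetical RAID 5 set: six drives of 2 TB each.
p_sata = rebuild_error_probability(6, 2, 1e15)  # SATA / NL-SAS class BER
p_sas = rebuild_error_probability(6, 2, 1e16)   # SAS class BER

print(f"BER 1 in 10^15: {p_sata:.1%} chance of an error during rebuild")
print(f"BER 1 in 10^16: {p_sas:.1%} chance of an error during rebuild")
```

The probability grows with both the number and the size of the drives, which fits the release note’s point that RAID 5 gets riskier as arrays get larger.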

Known Issues and Limitations

The following restrictions and known issues apply to this version of the PS Series Firmware. For information about known issues and restrictions from other releases, see the Release Notes for those versions. For issues about Dell EqualLogic FS Series Appliances, refer to the Dell EqualLogic FS Series Appliances Release Notes. For issues and limitations pertaining to host operating systems and iSCSI initiators, refer to the iSCSI Initiator and Operating System Considerations document.

RAID Conversion From No-Spares To Spares Does Not Work

RAID conversion from a no-spares policy to a spares policy appears to work, but it actually converts to no-spares, resulting in no change. (Funny)

I am pretty happy with SANHQ 2.2, HIT MS 4 and HIT VMware 3.1, and there aren’t many new features I need, so I’ve chosen to delay the upgrade for the time being.

Furthermore, there are two interesting videos presented by the Dell EQL User Group in Taiwan (in Chinese), in which the following new features are covered at length. (Part 1 and Part 2)

SAN HQ 2.5 Announcement

Dell announces Host Integration Tools for Microsoft 4.5

Dell Announces Host Integration Tools for VMware 3.5

Note: HIT for VMware v3.1.1 and earlier is not compatible with Version 6.0 of the PS Series Firmware. For compatibility, a later version of HIT for VMware must be installed before upgrading to Version 6.0.

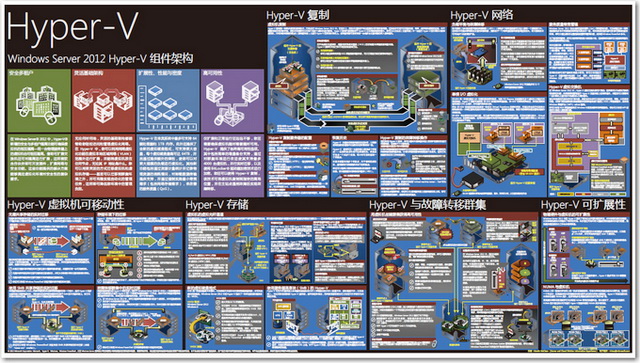

This huge PDF poster shows some of the advanced features that VMware doesn’t have. Even though I am not a Microsoft virtualization guy, it’s good to know what the competitor is up to.

“Keep It SIMPLE!”

That is what a data center veteran told me about 10 years ago.

It has been deeply embedded in my network/storage/security design methodology ever since, and it still holds true today. It has saved me from many pitfalls and allowed me to quickly track down exactly where the problems were.

It is especially true when your equipment (servers/switches/storage) is growing at a fast pace; “Keep It SIMPLE!” is the only tool that will save your life after all.

Important HKIX Announcement on New Charging Model

HKIX was established in April 1995, using spare resources of The Chinese University of Hong Kong. At the onset, HKIX could be offered as a free-of-charge service to the early participants with moderate network traffic. But over the years, with the growth in the demand for Internet traffic exchange and e-business, we have now grown into a critical Internet infrastructure covering 190+ autonomous systems and exchanging 250+ Gbps of data at peak time. To support such a huge network, the configuration of HKIX has also evolved from a primitive coaxial cable to Ethernet switches and to the now top-end equipment and technologies.

Obviously, it is not possible to leverage the network economy of the whole Hong Kong with virtually no cost. In 2005, we introduced the penalty-based cost-recovering model with a hope to curb abuse and to recover most of the cost. After eight years, we find that this extremely conservative model is not sustainable in front of the exponential growth in network traffic volume and growth in the demand for new connections. Together with the higher expectation of all the participants in the service, it calls for a continuous upgrade of the HKIX infrastructure in capacity and resilience. This requires substantial periodic capital investment and operating expenses. It is unfair and impossible for the university to subsidize the operation indefinitely. In fact, we have to do a major upgrade in 2013 to cope with the ever-increasing demand and to improve our resilience further with site resilience at the core.

In order to make the HKIX operation truly self-sustained in long term and to prepare for the continuous growth in the future while we could keep HKIX as a not-for-profit service, we have no choice but to implement a full-cost-recovery model, i.e. simple port charge model. This charging mechanism is actually the industry norm, which is fair and consistent for all participants.

Simple port charge model will be implemented starting 01-JAN-2013 for all new participants and new connection requests. For existing participants with no change of connections, the existing terms and conditions will not change until 30-JUN-2013. To further ease the transition, we shall still provide to each participant up to 2 free FE/GE ports in total (counting all ports at all HKIX sites) until 30-JUN-2014. After 30-JUN-2014, all ports will be chargeable.

The new port charges (applied to all HKIX sites) will be:

FE/GE: US$120/port/month (no one-time connection charge)

10GE: US$1,000/port/month (plus one-time non-refundable connection charge: LR – US$2,300; ER – US$7,000)

(Exchange Rate: US$1=HK$7.8)

We strongly believe the charges laid down are on the low side when compared to other parts of the world. Nevertheless, we shall review the charges periodically and uphold our principles of fairness and consistency.

If you have any queries regarding this new arrangement, please feel free to contact our core team at hkix-core at itsc.cuhk.edu.hk.

We thank you for your understanding and wish you’ll have a Merry Christmas and a Happy New Year.

HKIX Team
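For anyone budgeting against the new HKIX port charges above, the arithmetic can be sketched as follows. The rates, the free-port allowance and the exchange rate are taken from the announcement; the function names and the example participant (three GE ports plus one 10GE LR port) are hypothetical and only there for illustration.

```python
# Rates as stated in the HKIX announcement.
USD_TO_HKD = 7.8
FE_GE_MONTHLY_USD = 120
TEN_GE_MONTHLY_USD = 1000
TEN_GE_CONNECTION_USD = {"LR": 2300, "ER": 7000}  # one-time, non-refundable


def monthly_charge_usd(fe_ge_ports: int, ten_ge_ports: int,
                       free_fe_ge_ports: int = 0) -> int:
    """Recurring monthly charge in USD.

    `free_fe_ge_ports` models the transition allowance of up to 2 free
    FE/GE ports per participant (until 30-JUN-2014 per the announcement).
    """
    billable_fe_ge = max(fe_ge_ports - free_fe_ge_ports, 0)
    return billable_fe_ge * FE_GE_MONTHLY_USD + ten_ge_ports * TEN_GE_MONTHLY_USD


def one_time_charge_usd(ten_ge_optics: list) -> int:
    """One-time connection charge in USD for a list of 10GE ports ("LR"/"ER")."""
    return sum(TEN_GE_CONNECTION_USD[optic] for optic in ten_ge_optics)


def to_hkd(usd: float) -> float:
    return usd * USD_TO_HKD


# Hypothetical participant: 3 GE ports and 1 10GE LR port.
during_transition = monthly_charge_usd(3, 1, free_fe_ge_ports=2)
after_transition = monthly_charge_usd(3, 1)
setup = one_time_charge_usd(["LR"])

print(f"Monthly with 2 free ports: US${during_transition} (HK${to_hkd(during_transition):,.0f})")
print(f"Monthly, all ports billed: US${after_transition} (HK${to_hkd(after_transition):,.0f})")
print(f"One-time 10GE connection:  US${setup} (HK${to_hkd(setup):,.0f})")
```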